
Build your future with Zyngram. A unified platform for social media, e-commerce, recharges, ride-sharing, and rewards – all in one smart hub.


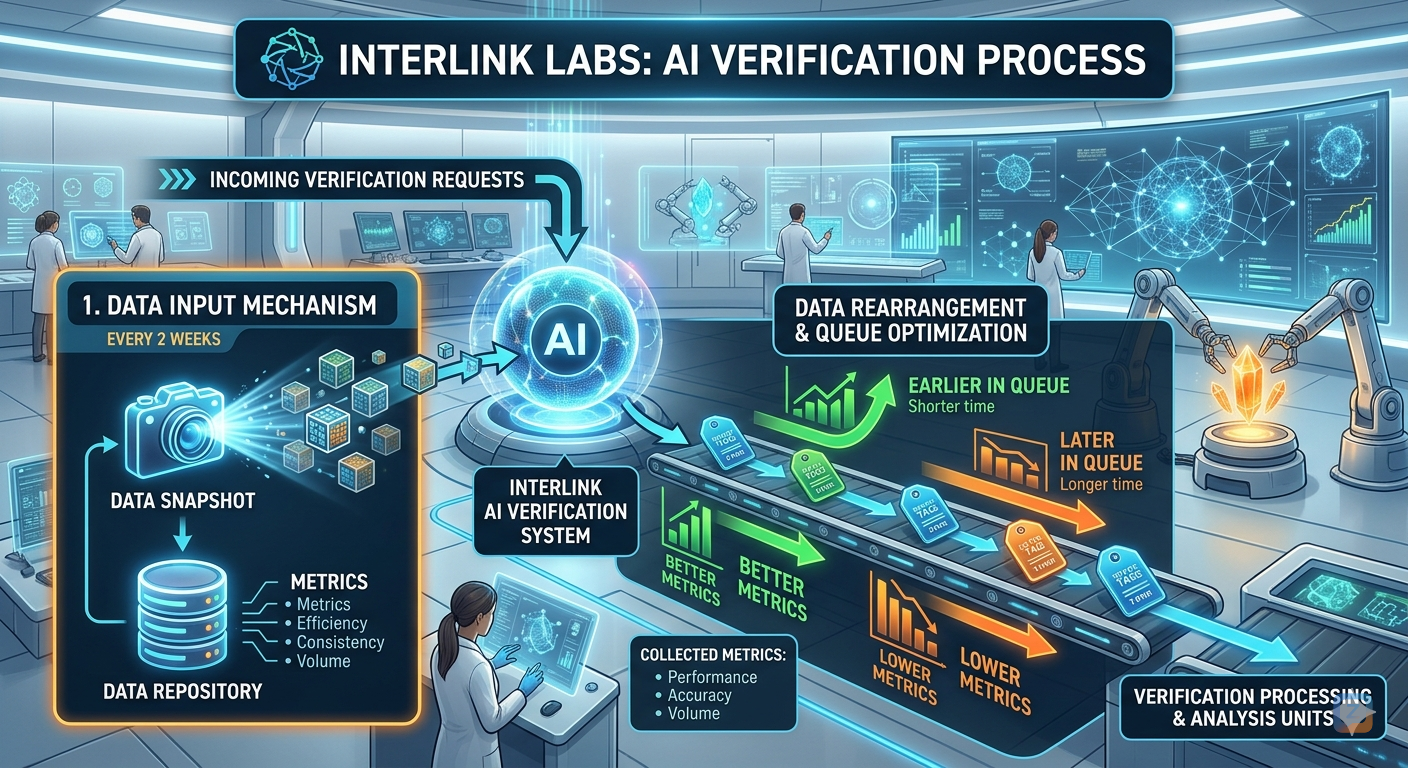

InterLink Labs: AI Verification Workflow

The InterLink Labs AI Verification Process is a data-driven cycle that runs every two weeks and is intended to keep data accuracy and processing efficiency high across the ecosystem. A rearrangement mechanism reorders the verification queue so that higher-quality data is processed first.

1. Data Input Mechanism

The process begins with a Data Snapshot taken every two weeks. This snapshot captures essential information from the Data Repository, focusing on four key pillars:

Metrics: Raw performance data.

Efficiency: How effectively resources are utilized.

Consistency: The reliability of data over time.

Volume: The total scale of the data being processed.
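As a rough illustration, the four pillars could be carried in a small record type. This is only a sketch: the post does not describe any schema, so the class name, field types, `node_id`, and the helper `next_snapshot_date` are all assumptions made for the example. Only the four pillar names and the two-week interval come from the post.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# The post says a snapshot is taken every two weeks.
SNAPSHOT_INTERVAL = timedelta(weeks=2)


@dataclass(frozen=True)
class DataSnapshot:
    """Hypothetical record for one snapshot; field types are assumed."""
    node_id: str        # assumed identifier, not named in the post
    taken_on: date      # date the snapshot was captured
    metrics: float      # raw performance data
    efficiency: float   # how effectively resources are utilized
    consistency: float  # reliability of data over time
    volume: int         # total scale of the data being processed


def next_snapshot_date(last: date) -> date:
    """Return the date of the following snapshot, two weeks later."""
    return last + SNAPSHOT_INTERVAL


snap = DataSnapshot("node-a", date(2026, 4, 1), 0.90, 0.80, 0.95, 1200)
print(next_snapshot_date(snap.taken_on))  # 2026-04-15
```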

2. AI Verification System

Incoming verification requests are routed to the central InterLink AI Verification System. This AI core is the primary engine for checking each data snapshot against real-world performance and accuracy benchmarks.

3. Data Rearrangement & Queue Optimization

To maximize throughput, the system employs an intelligent queue optimization strategy:

Priority (Earlier in Queue): Nodes or data sets with better metrics are moved to the front of the queue, which significantly shortens their processing time.

Standard (Later in Queue): Nodes or data sets with lower metrics are placed further back in the queue, so full verification and analysis takes longer.
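The ordering rule above can be sketched as a priority queue. This is a minimal illustration, not InterLink's actual implementation: the post does not say how metrics are scored or combined, so the single numeric score, the function names, and the first-come tie-break are assumptions.

```python
import heapq
from itertools import count


def build_queue(requests):
    """Build a heap from (name, metric_score) pairs.

    heapq is a min-heap, so the score is negated to pop the best
    metrics first. The counter keeps arrival order for equal scores
    (an assumed tie-break; the post does not specify one).
    """
    arrival = count()
    heap = []
    for name, score in requests:
        heapq.heappush(heap, (-score, next(arrival), name))
    return heap


def drain(heap):
    """Pop every entry and return names in processing order."""
    order = []
    while heap:
        _, _, name = heapq.heappop(heap)
        order.append(name)
    return order


order = drain(build_queue([("node-a", 0.62), ("node-b", 0.91), ("node-c", 0.75)]))
print(order)  # ['node-b', 'node-c', 'node-a']
```

A heap is used here because new requests can be pushed at any time without re-sorting the whole queue; sorting a list once per cycle would work equally well if all requests are known up front.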

4. Processing & Analysis

In the final stage, the reordered data is sent to the Verification Processing & Analysis Units. There, the collected metrics (specifically Performance, Accuracy, and Volume) undergo final validation to ensure the integrity of the network.

#InterLink #ITLG #ITL


Nimmaka Kantharao

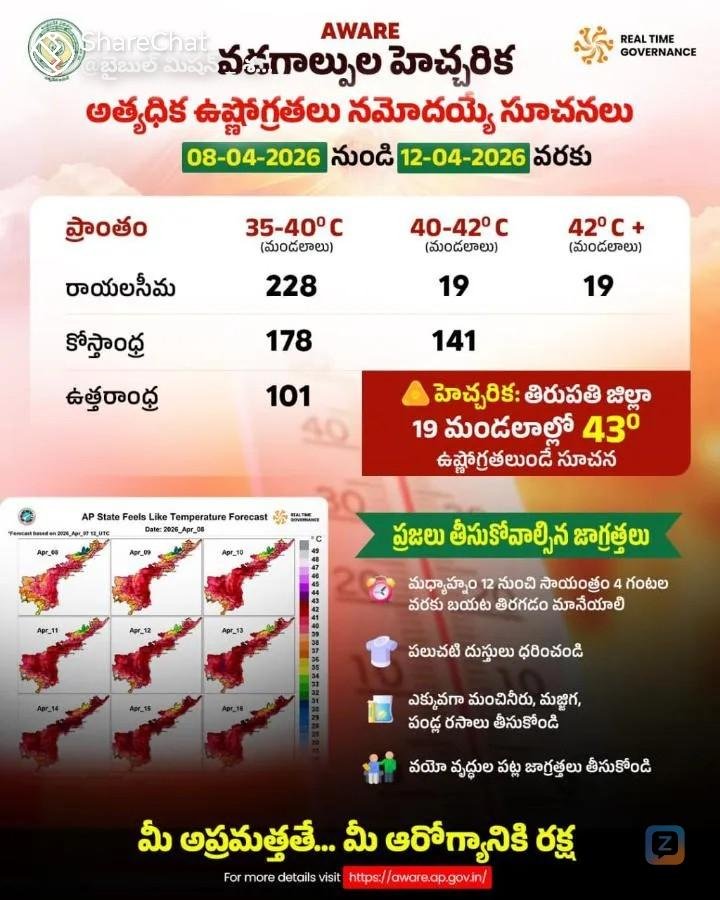

Zyngram Citizen shared Dulipati Raghavulu's photo. Posted 2026-04-10 16:52:39
